The complete version of Photoshop comes bundled with my Lightroom subscription and it's like being given a fully stocked tool shed with every tool ever invented. Of course actually using Photoshop is like going into that shed and finding a hammer and some nails, maybe a saw, and ignoring all the other tools ever invented. But sometimes you pick up a strange tool and sometimes it actually works intuitively or at the very least does something interesting, so you make a note of it.

I think I came across the Auto-Blend tool in Photoshop when I was playing with averaging multiple images. This is when you stack a bunch of pictures on top of each other and ask the computer to calculate the average colour value for each pixel location. If your images are in any way similar you'll get a ghostly image that looks a bit like all of them. If the images are all different you'll get a grey-brown smudge of nothing. I used it for the 648 MPs piece because it gave the sense of institutional anonymity I was after, but it doesn't really help with my ongoing challenge to see "all the pictures all at once".

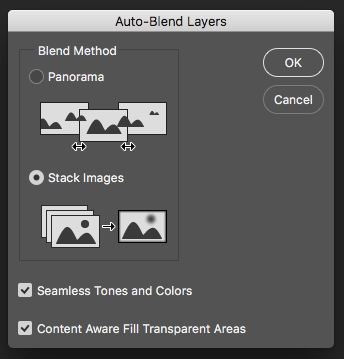
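For the curious, the averaging idea fits in a few lines of Python. This is only a minimal sketch with NumPy, not whatever Photoshop does internally. It assumes every image is already the same size and loaded as an 8-bit RGB array, and the function name `average_images` is made up for illustration.

```python
import numpy as np

def average_images(images):
    """Return the per-pixel mean colour of a list of same-sized images."""
    # Stack into one array of shape (n_images, height, width, channels).
    # Convert to float first so adding 8-bit values can't overflow.
    stack = np.stack([np.asarray(img, dtype=np.float64) for img in images])
    mean = stack.mean(axis=0)
    # Round back to ordinary 8-bit pixel values.
    return np.clip(np.rint(mean), 0, 255).astype(np.uint8)

# Two tiny 1x2 "photos" to show the idea.
a = np.array([[[200, 0, 0], [10, 10, 10]]], dtype=np.uint8)
b = np.array([[[100, 0, 50], [30, 30, 30]]], dtype=np.uint8)
print(average_images([a, b]))
```

Every pixel ends up as a compromise between all the inputs, which is why similar images give a ghost and dissimilar ones give a smudge.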

Auto-Blend is in the Edit menu and not particularly hidden away so it was fairly easy to stumble upon. It's designed for two tasks: panorama stitching, which most people are familiar with, and focus stacking.

Focus stacking is used to get deep depth-of-field when it's physically impossible to do so, usually with close-up or macro photography. Multiple shots are taken with the focal point moving down the subject. These are loaded into layers and the computer is asked to find the sharpest pixels in each area of the canvas. All blurry bits are discarded and the sharp stuff is stitched to make an image with clear focus all the way through. It's like HDR for focus, only not shit. Here's an example nicked off Wikipedia:

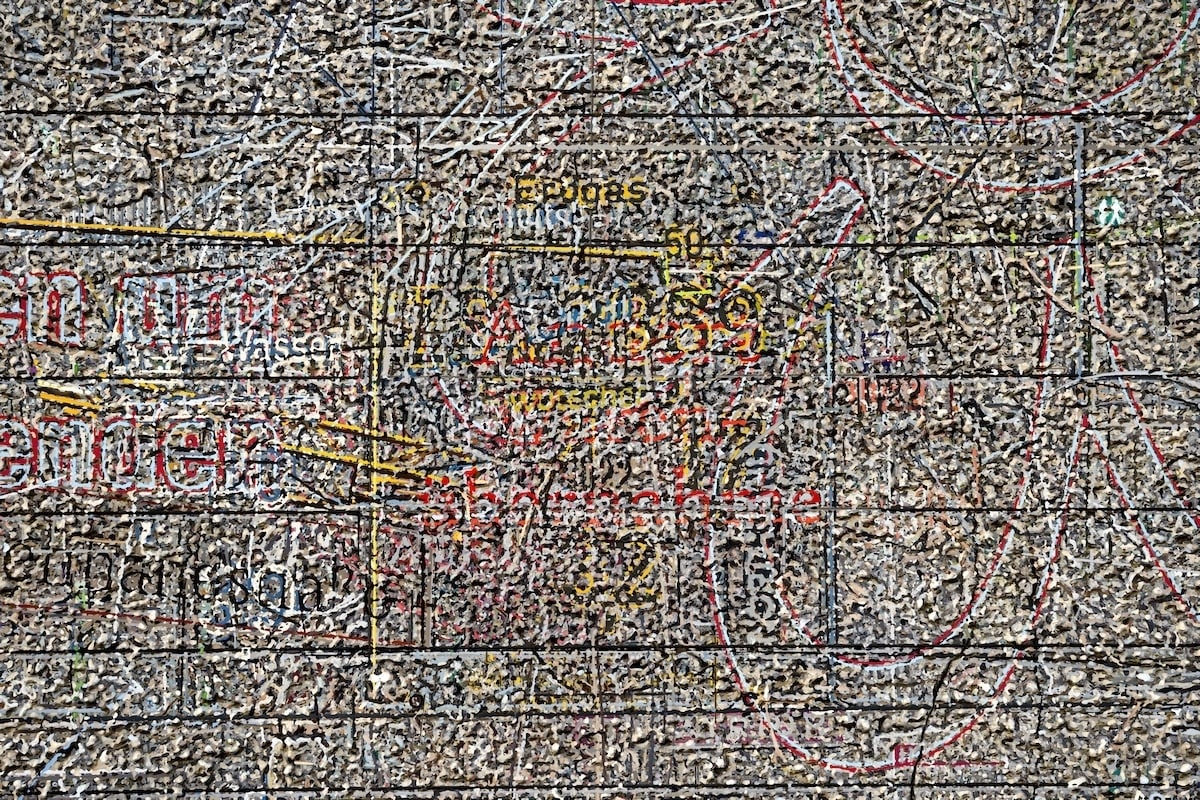
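The "find the sharpest pixels" step can be sketched in its most basic form like this. It is a toy version under stated assumptions: sharpness is measured with a simple Laplacian (how much a pixel differs from its four neighbours), the images are pre-aligned 8-bit RGB arrays of the same size, and the names `sharpness` and `focus_stack` are invented here. Real tools add alignment, blending and smoothing on top.

```python
import numpy as np

def sharpness(gray):
    """Absolute 4-neighbour Laplacian: large where brightness changes abruptly."""
    g = np.pad(gray.astype(np.float64), 1, mode="edge")
    lap = (g[:-2, 1:-1] + g[2:, 1:-1] + g[1:-1, :-2] + g[1:-1, 2:]
           - 4.0 * g[1:-1, 1:-1])
    return np.abs(lap)

def focus_stack(images):
    """For each pixel, keep the colour from whichever image is sharpest there."""
    stack = np.stack(images)                        # (n, height, width, 3)
    gray = stack.astype(np.float64).mean(axis=3)    # (n, height, width)
    scores = np.stack([sharpness(g) for g in gray])
    winner = scores.argmax(axis=0)                  # index of the sharpest image per pixel
    rows, cols = np.indices(winner.shape)
    return stack[winner, rows, cols]                # (height, width, 3)
```

Where several images tie (for example, everything is flat), `argmax` simply picks the earliest image in the list, so the order of the stack matters.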

But what happens when you give this tool images that are completely different and ask it to find the sharpest bits? Well, if you give it 80 photos taken during a walk around Linz, Austria, as I did in November, you get this:

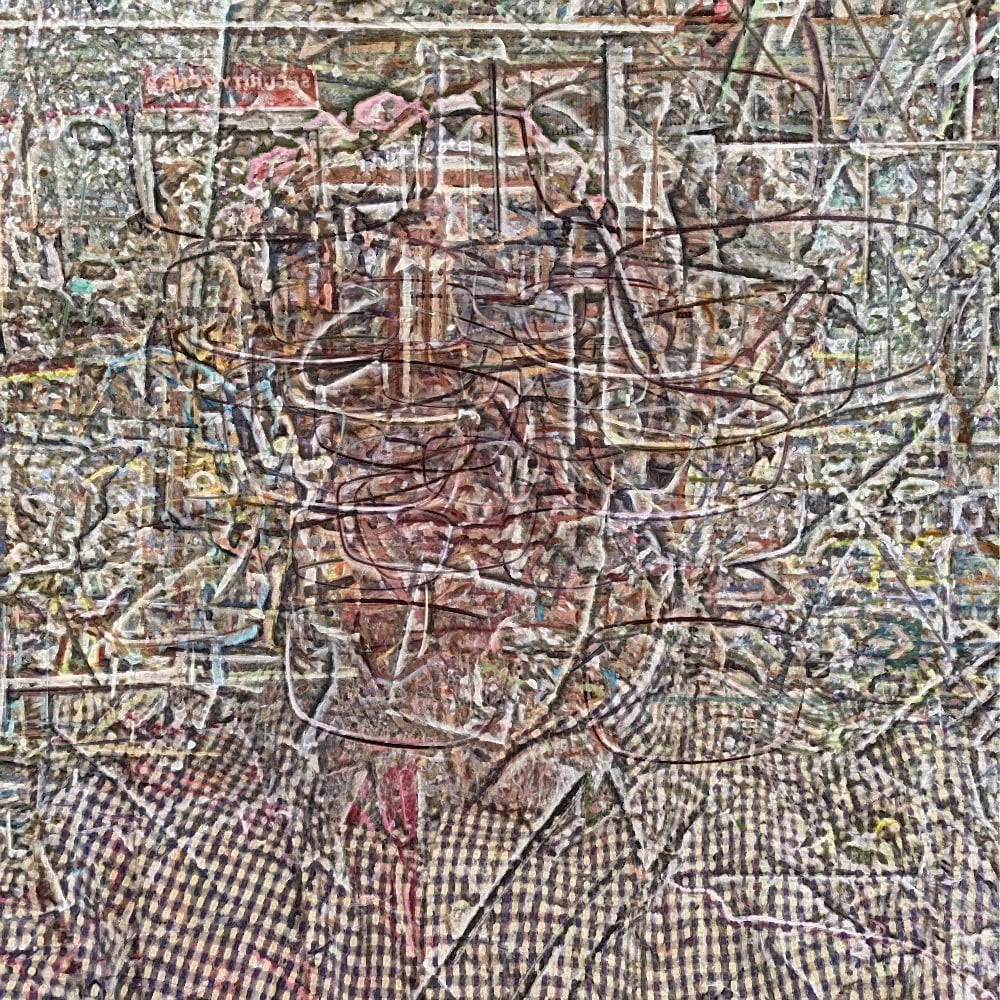

and if you take Time magazine's top 100 photos of 2016 you get this:

Which is... well, it's certainly interesting.

I've gotten slightly hooked on this tool over the last few months, but it's very blunt. I load the pics into Photoshop and press a button which produces an image. The only way I can affect the outcome is by varying the images I put in. The tool is a black box. So I was looking for a way to reverse-engineer it and make something I could tweak and control.

I didn't realise the tool was for focus stacking to begin with. I knew it worked with panoramas, which is kinda like photogrammetry in my mind, but this application didn't feel like that. It felt more like physically squeezing the stack of images in a vice and seeing what details survived. But eventually I read the document I probably should have read at the beginning and learned how focus stacking works.

Unsurprisingly it involves the sort of mathematics that is beyond me, so getting into the guts is going to take a while, but I found a fairly simple Python script which does the most basic kind of focus stacking and, with some help from Oliver, got it working. Here's those Linz photos again.

It doesn't look much like the Photoshop version, which implies Photoshop is doing all sorts of object selection around the areas of sharpness, not to mention the smoothing at the end. So that's interesting.

I played around with this new tool for a few hours and started to get something threatening aesthetic niceness, maybe. This is 36 pictures of me looking at the camera. Spot the glasses.

With this I tried something different. By controlling the size of the areas saved, from 1 pixel to 17 pixels, I made eight versions. I then used the averaging tool to merge them together which gave it this much smoother feel.
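Here is one way the "several sizes, then average" trick could look in code. It is a guess at the idea rather than the actual script: it reads "size of the areas" as the width of the neighbourhood over which sharpness is summed, and the eight sizes in the default list are made up, since the exact values aren't recorded above. All function names are hypothetical.

```python
import numpy as np

def laplacian_abs(gray):
    """Absolute 4-neighbour Laplacian of a greyscale image."""
    g = np.pad(gray, 1, mode="edge")
    return np.abs(g[:-2, 1:-1] + g[2:, 1:-1] + g[1:-1, :-2] + g[1:-1, 2:]
                  - 4.0 * g[1:-1, 1:-1])

def box_sum(a, size):
    """Sum each pixel's size x size neighbourhood (size must be odd)."""
    r = size // 2
    p = np.pad(a, r, mode="edge")
    h, w = a.shape
    out = np.zeros((h, w), dtype=np.float64)
    for dy in range(size):
        for dx in range(size):
            out += p[dy:dy + h, dx:dx + w]
    return out

def focus_stack(images, size):
    """Pick, per pixel, the image that is sharpest over a size x size area."""
    stack = np.stack(images)                        # (n, height, width, 3)
    gray = stack.astype(np.float64).mean(axis=3)    # (n, height, width)
    scores = np.stack([box_sum(laplacian_abs(g), size) for g in gray])
    winner = scores.argmax(axis=0)
    rows, cols = np.indices(winner.shape)
    return stack[winner, rows, cols]

def smooth_stack(images, sizes=(1, 3, 5, 7, 9, 11, 13, 17)):
    """Make one stacked version per size, then average the versions."""
    versions = [focus_stack(images, s).astype(np.float64) for s in sizes]
    return np.rint(np.mean(versions, axis=0)).astype(np.uint8)
```

Small sizes give a speckled, pixel-by-pixel result and large sizes give blocky patches; averaging the versions softens the hard edges between patches.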

One of the reasons for finding a simple command-line tool, rather than Photoshop, was that I could batch process properly (Photoshop batch processing is a nightmare, probably because I'm doing something wrong). Here's five David Bowie music videos layered on top of each other.

It looks like a dodgy video effect from the early 80s, but that's okay. The point is I think I understand what's going on.

The next stage, I guess, is to explore how the algorithm chooses the pixels and see if I can't tweak that.

Ever onwards!